5 Recruit participants strategically

Researchers have many options for collecting data online, each better suited to certain research questions. Below, we outline key considerations for developing a clear recruitment plan tailored to different recruitment goals.

5.1 Choose the most suitable participant pool

5.1.1 I want data immediately.

Dedicated participant platforms like Prolific and CloudResearch Connect, where participants are financially compensated, are generally the fastest way to recruit. These services provide a steady stream of participants, allowing data collection from hundreds of individuals within mere hours. By contrast, recruitment through citizen science platforms, such as TestMyBrain.org and LabintheWild.org, or through social media is slower and less predictable (Reinecke and Gajos 2015), with studies often staying open for months and participation arriving in bursts via media coverage or outreach efforts (Hilton 2024).

5.1.2 I want large sample sizes at minimal cost.

If your goal is to collect large samples at minimal cost, citizen science platforms offer a potential solution. Unlike dedicated participant pools, which require financial compensation, citizen science approaches can generate massive datasets at little to no cost. Instead of payment, participants are rewarded with personalized performance feedback accompanied by explanations of the psychological principles under study (Gajos et al. 2020; Reinecke and Gajos 2015; Tsay et al. 2024). The key here is to spark curiosity: draw participants in with an engaging ad, keep tasks brief and fun, and provide meaningful feedback that encourages future participation. Notable examples include Samuel Mehr’s Music Lab (themusiclab.org), which has attracted millions to discover their “musical IQ” (Liu et al. 2023), and “Is My Blue Your Blue?” (ismy.blue), where people learn about their ability to perceive colors.

5.1.3 I want a diverse sample.

Online samples are typically more diverse and representative than those recruited in traditional laboratory studies (Casey et al. 2017; Douglas, Ewell, and Brauer 2023; Paolacci and Chandler 2014; Peer et al. 2017). Because these platforms have a low barrier to entry – requiring minimal registration or screening, and no need to be co-located with the experimenter – they enable access to wider, more inclusive samples. Prior studies using such approaches have recruited native speakers of 54 languages (Liu et al. 2023), participants from 200 countries (Coutrot et al. 2018), and individuals spanning the human lifespan (Hartshorne and Germine 2015).

5.1.4 I want specific populations.

When targeting specific populations, most recruitment services allow researchers to choose participants based on demographics such as age, gender, profession, or hobbies. Custom screening can also be achieved by administering questionnaires prior to the experiment to identify individuals meeting desired criteria; this enables access to traditionally hard-to-reach groups, such as those with specific (and sometimes rare) disabilities (Smith et al. 2015). Citizen science via social media broadens these opportunities further, for example through targeted advertising to specific communities.

For recruiting patient groups, we recommend recruiting directly through clinicians or partnering with established registries. Registries are usually condition-specific, for example for Parkinson’s disease (Kim et al. 2018), cerebral palsy (Gross et al. 2020), or stroke (Durisko et al. 2016). Notably, an advantage of online neuropsychological studies is the ability to recruit tightly matched control participants: each patient can be treated as the reference case, and demographic filters (age, sex/gender, handedness, education, and, on platforms like Prolific, even device type) can be used to recruit a one-to-one matched control participant.
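The one-to-one matching logic can be sketched in Python. This is a minimal illustration under stated assumptions, not a platform API: the `Person` fields, the age and education tolerances, and the greedy nearest-age assignment are hypothetical choices made for the example. In practice, the same criteria would be entered as screening filters on the recruitment platform, with each patient's profile serving as the reference.

```python
from dataclasses import dataclass


# Hypothetical record layout; field names are illustrative, not any platform's schema.
@dataclass(frozen=True)
class Person:
    pid: str
    age: int
    sex: str
    handedness: str
    education_years: int
    device: str


def is_match(patient, candidate, age_tol=2, edu_tol=2):
    """Return True if the candidate meets this patient's matching criteria.

    Age and education must fall within the (hypothetical) tolerances;
    sex, handedness, and device type must match exactly.
    """
    return (
        abs(candidate.age - patient.age) <= age_tol
        and candidate.sex == patient.sex
        and candidate.handedness == patient.handedness
        and abs(candidate.education_years - patient.education_years) <= edu_tol
        and candidate.device == patient.device
    )


def match_controls(patients, candidates, age_tol=2, edu_tol=2):
    """Greedy one-to-one matching: each patient receives at most one unused control.

    Among eligible candidates, the one closest in age is chosen (ties broken by
    education, then by candidate order). Patients without a match map to None,
    signalling that a control still needs to be recruited with that patient's filters.
    """
    used = set()
    pairs = {}
    for patient in patients:
        eligible = [
            c for c in candidates
            if c.pid not in used and is_match(patient, c, age_tol, edu_tol)
        ]
        if not eligible:
            pairs[patient.pid] = None
            continue
        best = min(
            eligible,
            key=lambda c: (abs(c.age - patient.age),
                           abs(c.education_years - patient.education_years)),
        )
        used.add(best.pid)
        pairs[patient.pid] = best.pid
    return pairs
```

Greedy assignment is simple and transparent; when suitable controls are scarce, an optimal assignment method (for example, the Hungarian algorithm) could reduce the total mismatch across all pairs.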

However, easier online access to diverse demographics does not guarantee representativeness. Online samples are often skewed toward individuals with higher cognitive abilities, “super-agers,” those curious about online tasks, and those who are more tech-savvy (Ogletree and Katz 2021; Spiers, Coutrot, and Hornberger 2023). And although they tend to be more diverse than the typical college sample, these cohorts remain skewed toward WEIRD (Western, Educated, Industrialized, Rich, and Democratic) populations (Paolacci and Chandler 2014). Researchers must, therefore, remain vigilant about these selection biases when interpreting their findings.

5.2 Recruit from reliable participant pools

When using a dedicated participant pool, we recommend selecting a reputable platform and restricting participation to individuals with high approval ratings. While Amazon’s Mechanical Turk has been widely used for behavioral research (Casler, Bickel, and Hackett 2013; Crump, McDonnell, and Gureckis 2013), there has been a steady decline in data quality on this platform (Chmielewski and Kucker 2020; Kay 2025; Kennedy et al. 2020). In contrast, platforms built for research, such as Prolific and CloudResearch Connect, have consistently delivered higher-quality data (Albert and Smilek 2023; Douglas, Ewell, and Brauer 2023; Peer et al. 2021).

5.3 Recruit at consistent times of day

Participant demographics on recruitment platforms can vary by time of day (Casey et al. 2017; Moss 2019); compounding this, behavior can also be influenced by daily circadian rhythms and annual academic cycles (Keller et al. 2025; Munnilari et al. 2024; Schmidt et al. 2007). To minimize this variability, we recommend recruiting participants at consistent times whenever possible, which is often easier to arrange online than in the lab.
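Whether sessions actually ran at consistent times can also be checked after data collection. The sketch below assumes that each session's start time was logged in UTC together with the participant's UTC offset (a hypothetical logging scheme; fixed offsets ignore daylight-saving changes) and flags sessions that started outside a target local-time window. The 9:00 to 17:00 window is an arbitrary example, not a recommendation.

```python
from datetime import datetime, timedelta, timezone


def local_hour(start_utc, utc_offset_hours):
    """Convert an aware UTC start time to the participant's local clock hour.

    Assumes a fixed UTC offset was recorded per participant (DST is ignored).
    """
    local = start_utc.astimezone(timezone(timedelta(hours=utc_offset_hours)))
    return local.hour


def outside_window(sessions, start_hour=9, end_hour=17):
    """Return IDs of sessions whose local start hour is outside [start_hour, end_hour).

    `sessions` is an iterable of (session_id, start_utc, utc_offset_hours) tuples.
    """
    flagged = []
    for session_id, start_utc, offset in sessions:
        hour = local_hour(start_utc, offset)
        if not (start_hour <= hour < end_hour):
            flagged.append(session_id)
    return flagged
```

Flagged sessions need not be excluded; local time of day can instead be retained as a covariate in the analysis.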

5.4 Solicit feedback in small batches

When launching an online experiment, start with a small batch of participants and use their written feedback to iteratively improve clarity and usability. For example, if your target sample size is 50, begin with five participants, run quality checks, and refine the study before scaling up. This staggered approach helps catch implementation errors early on, paving the way for a smoother, more polished experiment.
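The staggered schedule can be sketched as follows. The batch size used after the five-person pilot and the pass criteria in `ready_to_scale` (a minimum completion rate and no problems reported in written feedback) are hypothetical placeholders; actual quality checks should reflect each study's own exclusion criteria.

```python
def plan_batches(target_n, pilot_n=5, batch_n=15):
    """Split a target sample into a small pilot batch followed by larger batches.

    pilot_n and batch_n are illustrative defaults, not prescribed values.
    """
    if target_n <= 0 or pilot_n <= 0 or batch_n <= 0:
        raise ValueError("all sizes must be positive")
    batches = [min(pilot_n, target_n)]
    remaining = target_n - batches[0]
    while remaining > 0:
        size = min(batch_n, remaining)
        batches.append(size)
        remaining -= size
    return batches


def ready_to_scale(pilot, min_completion=0.8):
    """Hypothetical gate: scale up only if most pilot participants finished
    and none reported a problem in their written feedback.

    Each pilot record is a dict with boolean 'completed' and 'reported_issue' keys.
    """
    if not pilot:
        return False
    completed = sum(1 for p in pilot if p["completed"])
    any_issue = any(p["reported_issue"] for p in pilot)
    return completed / len(pilot) >= min_completion and not any_issue
```

For example, `plan_batches(50)` yields `[5, 15, 15, 15]`: a five-person pilot followed by three larger batches, with `ready_to_scale` consulted before each launch after the pilot.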

5.5 The principle in action

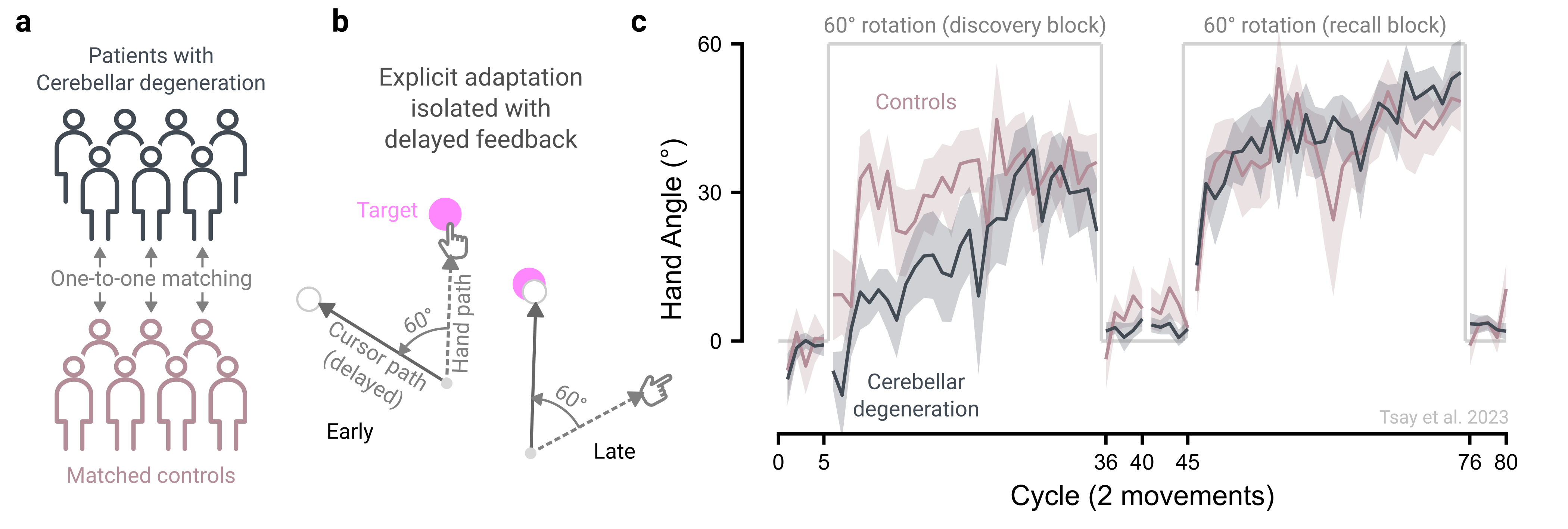

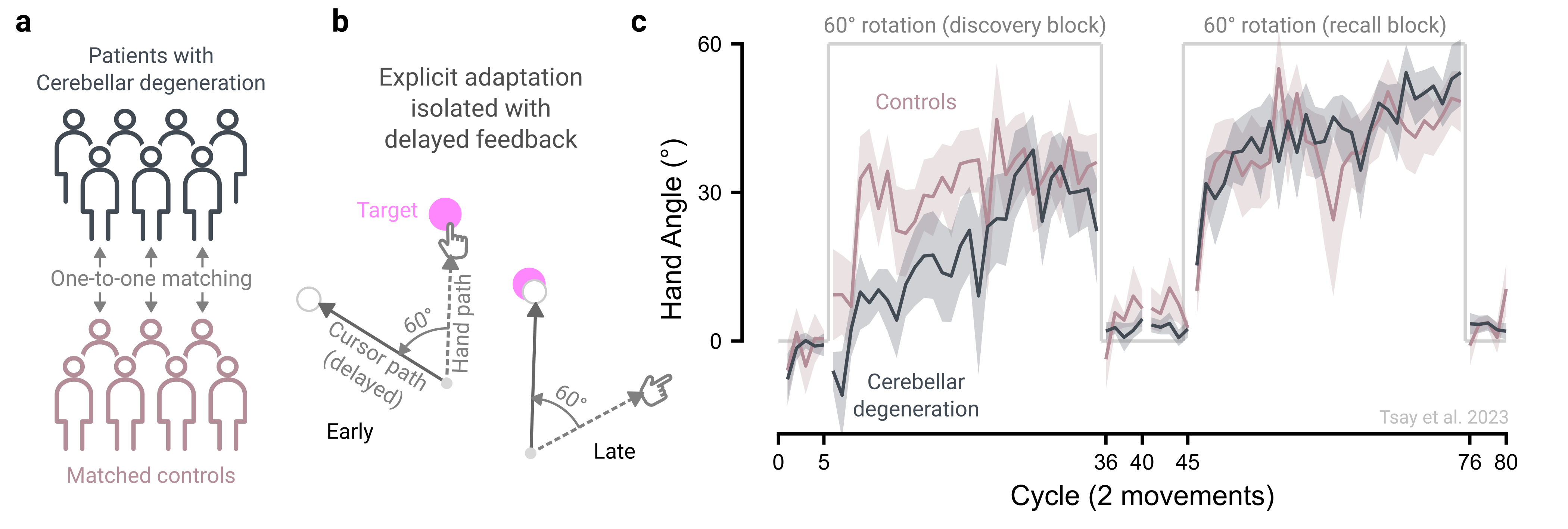

Decades of laboratory research have shown that patients with cerebellar degeneration are impaired in implicit processes governing motor adaptation (Morehead et al. 2017; Tseng et al. 2007). That is, patients with cerebellar degeneration exhibit smaller aftereffects than age-matched controls. More recently, the cerebellum has also been implicated in higher-level cognitive functions, such as decision-making, working memory, and numerical cognition, raising the question of whether it also contributes to the explicit re-aiming strategies that underlie motor adaptation (for reviews, see Schmahmann 2019; Tsay and Ivry 2025).

To directly test this possibility, we compared patients with cerebellar degeneration to matched controls in an online visuomotor rotation task that isolates explicit re-aiming (Figure 5.1a-b; Tsay, Schuck, and Ivry 2023). Recruiting patients through our registry and testing them online removed the logistical barriers of bringing a globally distributed cohort into the lab. It also enabled one-to-one matching of each patient with a control participant recruited via Prolific. The results revealed an unexpected dissociation: early in learning, patients with cerebellar degeneration were impaired at discovering an effective re-aiming strategy to counteract the imposed visuomotor rotation, but they were just as capable as controls at recalling a learned strategy. These online results thus point to a novel role for the cerebellum in strategy discovery during motor adaptation, consistent with its broader contributions to cognitive function (Figure 5.1c).