1 Maximize the advantages of online crowdsourcing

The convenience of online testing offers many advantages for both researchers and participants. It not only accelerates data collection but also enables experiments to be run concurrently at a scale rarely achievable in the lab. Moreover, online platforms make it possible to recruit populations that are less easily accessible in traditional lab-based research.

1.1 Advantage 1: Designs with many participants

Ready access to crowdsourced samples that can be tested in parallel can enable experiments to be conducted on an unprecedented scale (Reips 2021). Such large-scale studies offer many unique opportunities: they provide robust evidence for small effects (Tsay, Asmerian, et al. 2024); allow multiple moderating variables to be tested concurrently (Coutrot et al. 2018); and enable researchers to assess how broadly effects generalize across diverse groups (Liu et al. 2023).

Concurrent participation introduces a powerful invariance to study design: recruiting 200 participants to each complete 20 conditions requires no more effort than recruiting 20 participants to complete 200 conditions each. The former “many-participants few-trials” design, impractical in the lab, enables researchers to test generalizability across massive stimulus sets, such as evaluating a host of decision-making models on 10,000 risky gambles (Peterson et al. 2021) or assessing typing ability across 1,500 sentences (Dhakal et al. 2018). Similar designs have also transformed robotics research: for example, the ROBOTURK platform crowdsourced over 2,200 high-quality teleoperated demonstrations in just 22 hours, generating more than 100 hours of data for optimizing surgical procedures (Mandlekar et al. 2018).
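To make the invariance concrete, the following is a minimal sketch of how a stimulus set might be dealt out across participants so that every stimulus receives the same number of observations. The function `assign_stimuli`, its parameters, and the round-robin scheme are illustrative assumptions, not a procedure taken from the studies cited above.

```python
import random
from collections import Counter


def assign_stimuli(n_stimuli, n_participants, per_participant, seed=0):
    """Deal stimuli to participants so that coverage is balanced.

    Hypothetical helper: stimuli are shuffled once, then handed out in
    consecutive blocks that wrap around the shuffled list.
    """
    if per_participant > n_stimuli:
        raise ValueError("a participant cannot see more stimuli than exist")
    total = n_participants * per_participant
    if total % n_stimuli != 0:
        raise ValueError("total observations must be a multiple of n_stimuli")

    rng = random.Random(seed)
    order = list(range(n_stimuli))
    rng.shuffle(order)

    assignments = []
    for p in range(n_participants):
        start = p * per_participant
        # Consecutive positions (mod n_stimuli) never repeat within a block.
        block = [order[(start + i) % n_stimuli] for i in range(per_participant)]
        assignments.append(block)
    return assignments


# Two designs with the same total number of observations (4,000).
wide = assign_stimuli(n_stimuli=200, n_participants=200, per_participant=20)
deep = assign_stimuli(n_stimuli=200, n_participants=20, per_participant=200)

wide_counts = Counter(s for block in wide for s in block)
deep_counts = Counter(s for block in deep for s in block)
```

Under these assumptions, both designs yield 20 observations per stimulus. They differ only in how the observations are split across people, which is the dimension that online recruitment makes cheap to vary.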

1.2 Advantage 2: Designs with many timepoints

Online crowdsourcing is particularly well-suited for longitudinal studies. Large cohorts can be readily recruited for multi-session participation, and retention rates often rival, or even surpass, those of traditional studies lasting months or years (Kothe and Ling 2019). For example, a recent study tracked first-person shooter performance across 100 days of practice (Listman et al. 2021), far exceeding the typical 5-day span of in-lab skill learning studies (e.g., Shmuelof, Krakauer, and Mazzoni 2012).

1.3 Advantage 3: Designs with specific populations

Online recruitment makes it possible to access specific, hard-to-reach demographics that are difficult, or even impossible, to recruit through traditional in-lab methods (Smith et al. 2015). Many participant pools and citizen science approaches enable selective recruitment by demographics, making it straightforward to target specific populations, for example, video game players (Hyde et al. 2025), left-handed individuals, or individuals reporting neuropsychiatric symptoms (Atkinson et al. 2025; Barack et al. 2024). Additionally, online testing makes it convenient to recruit rare patient groups and find closely matched controls (Tsay, Chandy, et al. 2024; Tsay, Schuck, and Ivry 2023). Remote testing can also reach infant populations that are logistically difficult to bring into the lab (Raz et al. 2024).

1.4 The principle in action

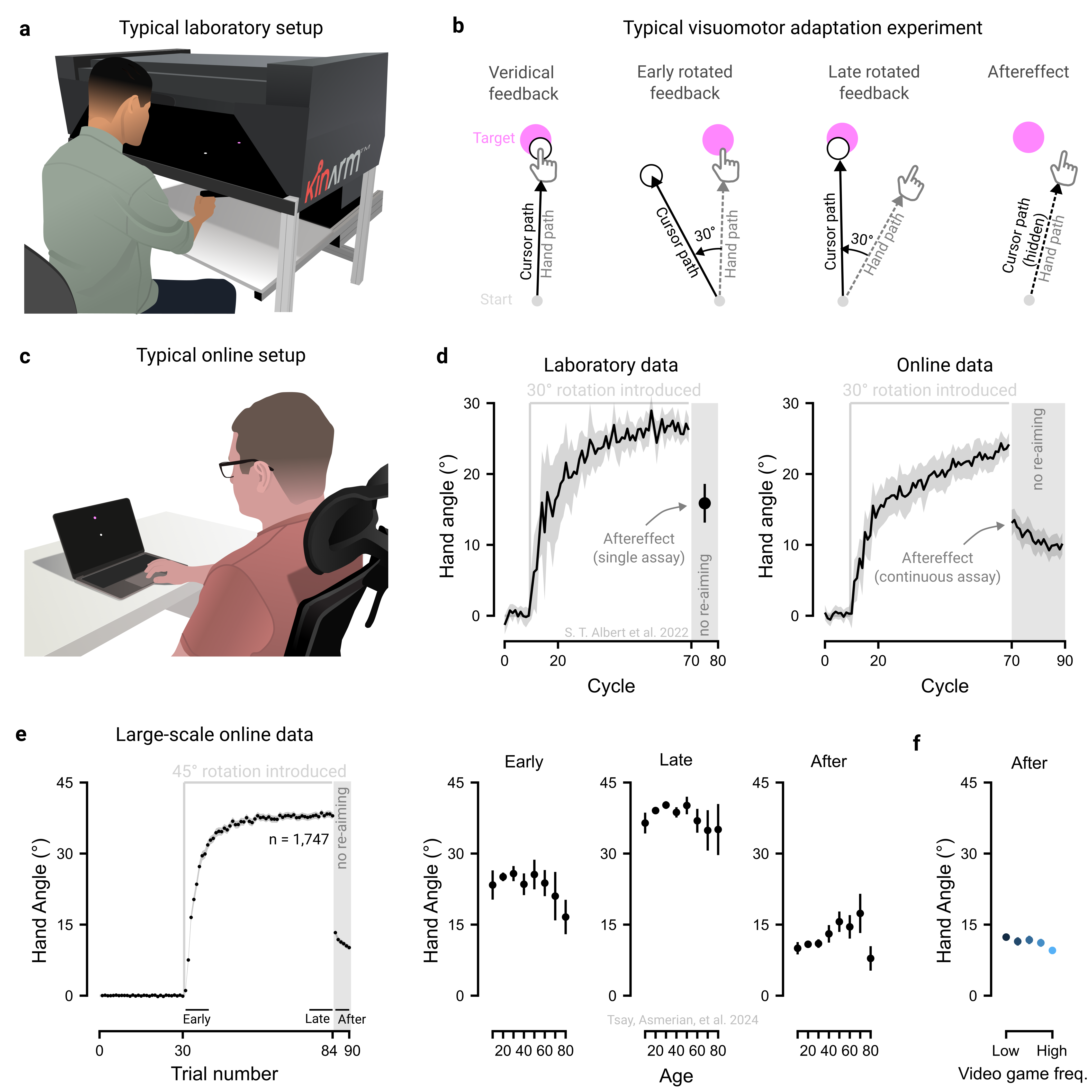

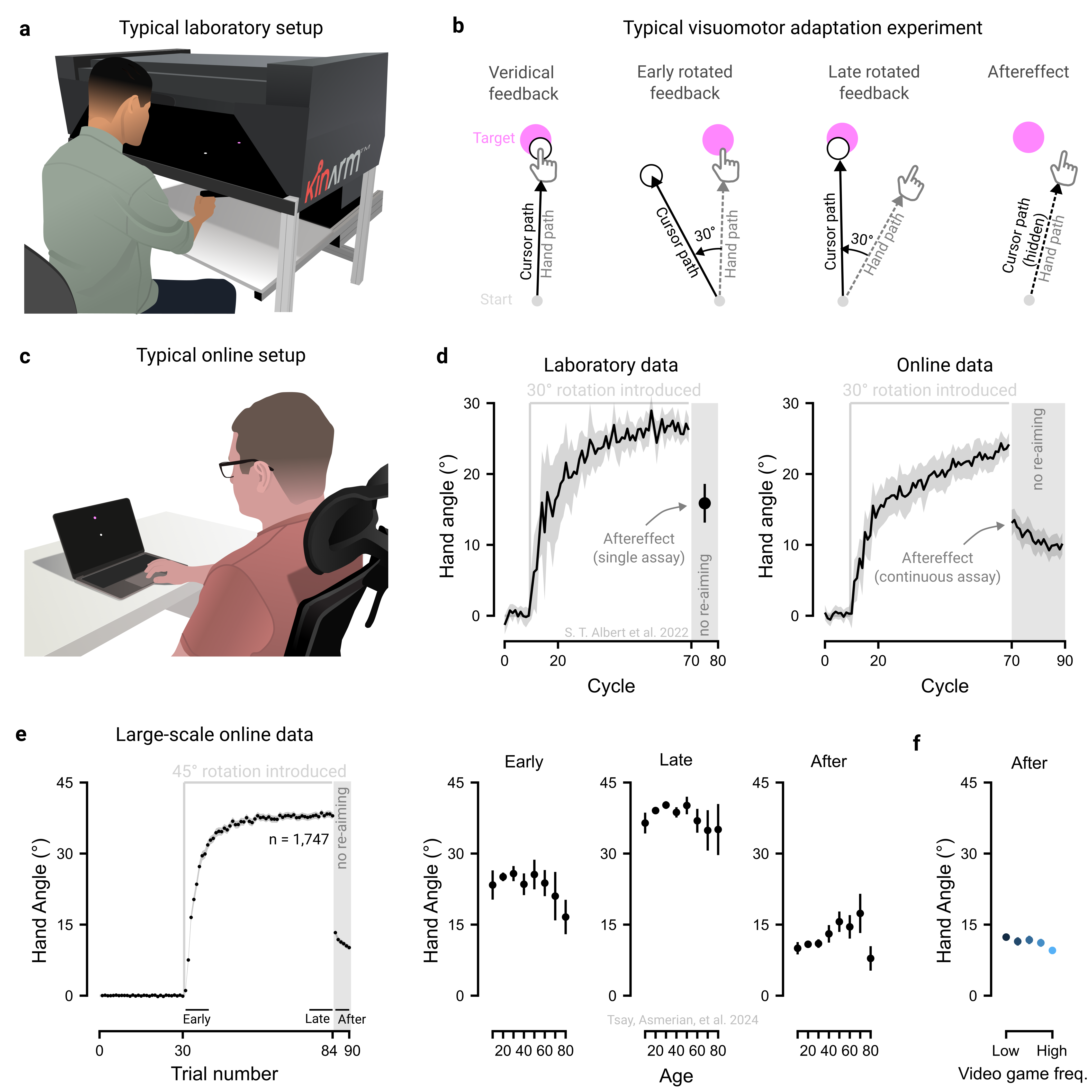

Motor adaptation, the process of correcting movement errors in response to changes in the body (e.g., muscle fatigue) and environment (e.g., a new ping-pong paddle), is typically studied in controlled laboratory settings using specialized equipment, such as robotic manipulanda (Figure 1.1a). In a typical visuomotor adaptation experiment, participants move their hand to control an on-screen cursor and reach toward a target (Figure 1.1b). After a period of veridical feedback, a visuomotor rotation (e.g., 45°) is introduced between the hand and cursor movements. Participants adjust by changing their hand’s movement angle in the direction opposite to the rotation, gradually aligning the cursor with the target. This adjustment is driven by both implicit and explicit motor learning processes.
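The geometry of the rotation can be sketched in a few lines. The first two functions below simply rotate the hand position to obtain the cursor position. The third is a deliberately simple trial-by-trial error-correction rule, included only to illustrate gradual compensation. The sign convention (counterclockwise positive), the learning rate, and the update rule are assumptions made for illustration; the rule does not separate implicit from explicit learning and is not the model used in the cited studies.

```python
import math


def rotate_point(x, y, angle_deg):
    """Rotate (x, y) counterclockwise about the origin by angle_deg."""
    theta = math.radians(angle_deg)
    return (x * math.cos(theta) - y * math.sin(theta),
            x * math.sin(theta) + y * math.cos(theta))


def cursor_angle(hand_x, hand_y, rotation_deg):
    """Direction (in degrees) of the rotated cursor for a hand position."""
    cx, cy = rotate_point(hand_x, hand_y, rotation_deg)
    return math.degrees(math.atan2(cy, cx))


def simulate_adaptation(rotation_deg=45.0, target_deg=0.0,
                        learning_rate=0.2, n_trials=50):
    """Hand angle on each trial under a simple error-correction rule.

    Assumed rule: after each reach, the hand angle shifts by a fixed
    fraction (learning_rate) of the cursor-to-target error.
    """
    hand = target_deg  # start by reaching straight at the target
    hand_angles = []
    for _ in range(n_trials):
        hand_angles.append(hand)
        error = (hand + rotation_deg) - target_deg  # cursor minus target
        hand -= learning_rate * error
    return hand_angles


# A hand reaching 45 degrees clockwise of the target fully cancels
# a 45 degree counterclockwise rotation.
hand_x = math.cos(math.radians(-45.0))
hand_y = math.sin(math.radians(-45.0))
compensated_cursor = cursor_angle(hand_x, hand_y, rotation_deg=45.0)

hand_angles = simulate_adaptation()
```

In this sketch, the simulated hand angle starts at the target direction and drifts toward the angle that cancels the rotation, at which point the cursor lands on the target.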

Motor learning researchers are often interested in isolating different components of learning. For example, to isolate implicit processes underlying motor adaptation, participants are instructed to forgo their re-aiming strategy and reach straight to the target. Yet, participants often continue to move in an adapted manner (e.g., reaching ~15° clockwise of their intended movement direction). This residual ‘aftereffect’, a measure of implicit motor adaptation, indicates that the sensorimotor map has been recalibrated outside of conscious awareness (Doya 2000; H. E. Kim, Avraham, and Ivry 2021; Krakauer et al. 2019; Shadmehr and Krakauer 2008; Wolpert, Diedrichsen, and Flanagan 2011).

However, motor learning studies typically involve small, homogeneous samples, raising concerns about the robustness and generalizability of findings beyond the laboratory. To overcome these limitations, many laboratories have recently turned to online crowdsourcing to complement traditional laboratory testing (Albert et al. 2022; Barradas, Koike, and Schweighofer 2024; Cesanek et al. 2021; Coltman et al. 2021; O. A. Kim, Forrence, and McDougle 2022; Shyr and Joshi 2024; Wang et al. 2024; Warburton et al. 2025; Watral et al. 2023; Weightman et al. 2022). These online tasks are designed to be simple and intuitive, involving standard software (e.g., Google Chrome) and hardware (e.g., the participant’s own trackpad or computer mouse; Figure 1.1c). Reassuringly, data collected online closely resemble data collected in the laboratory (Figure 1.1d; Albert et al. 2022).

Furthermore, the expansive sample sizes in web-based studies can enable us to chip away at longstanding controversies in the motor learning literature (Tsay, Asmerian, et al. 2024). For example, lab-based studies with smaller samples have produced mixed findings on the effects of aging – some report preserved motor adaptation, others declines, and still others enhancements (see meta-analysis in Cisneros et al. 2024). In contrast, large-scale web-based datasets (n > 1500) reveal a clearer pattern: an inverted-U relationship between age and both early and late adaptation, peaking between ages 35 and 45, along with increased aftereffects in older adults (Figure 1.1e). As such, studies that sample different segments of the same inverted-U curve can yield seemingly contradictory results.
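The way an inverted-U curve can produce conflicting small-sample findings can be shown with a noise-free toy example. The quadratic function and all of its parameters (a peak at age 40, a peak value of 30°, and the curvature) are hypothetical values chosen for illustration, not estimates from the datasets described above. A linear trend is then fitted within three different age windows.

```python
def adaptation(age, peak_age=40.0, peak_value=30.0, curvature=0.01):
    """Hypothetical inverted-U relation between age and adaptation (deg)."""
    return peak_value - curvature * (age - peak_age) ** 2


def ols_slope(xs, ys):
    """Ordinary least-squares slope of ys regressed on xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx


def slope_in_age_range(lo, hi):
    """Linear age trend a study would see if it sampled only ages lo..hi."""
    ages = list(range(lo, hi + 1))
    return ols_slope(ages, [adaptation(a) for a in ages])


slope_young = slope_in_age_range(18, 35)   # rising limb of the curve
slope_middle = slope_in_age_range(30, 50)  # window centered on the peak
slope_old = slope_in_age_range(45, 80)     # falling limb of the curve
```

Under these assumptions, the young window shows a positive age trend ("enhancement"), the window centered on the peak shows essentially none ("preserved"), and the older window shows a negative trend ("decline"), even though all three are drawn from the same underlying curve.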

Moreover, web-based approaches have uncovered previously unrecognized constraints on motor adaptation, showing that factors often ignored in lab studies – such as gaming habits, computer use, and motivation – can meaningfully shape learning (Figure 1.1f). Together, these crowdsourced findings reveal the hidden landscape of sensorimotor diversity and open new avenues for future research in this domain.