4 Validate your experiment

When launching an online study, researchers often worry whether the experiment will “work”: that is, whether technical or behavioral constraints will hinder implementation, and whether lab findings will generalize to an online format. To address these concerns, we recommend running brief tests (‘validation checks’) that confirm the online task runs as intended and reproduces known effects before extending the basic effect online.

There are several ways to verify whether an experiment works online. One is to replicate a well-established in-person finding in an online format, either by directly reproducing an existing study or by embedding a validation condition that tests whether a known behavioral signature appears in the online setting. Successful online replications of classic in-lab effects, such as the Stroop effect, have paved the way for many online extensions (Barnhoorn et al. 2015; Crump, McDonnell, and Gureckis 2013). Another approach is to adopt a hybrid strategy, collecting both in-lab and online data within the same experiment and directly comparing the effect sizes between settings (Dandurand, Shultz, and Onishi 2008; Germine et al. 2012; Sauter, Stefani, and Mack 2022; Semmelmann and Weigelt 2017; Uittenhove, Jeanneret, and Vergauwe 2023).

However, pinpointing the exact cause of behavioral differences between in-person and online settings can be challenging. Differences in technical setups (e.g., devices and screen refresh rate) and in participant characteristics (e.g., demographics and engagement) can both contribute to discrepancies. For instance, the mixed success in replicating masked priming online, with success in some studies (Angele et al. 2022; Barnhoorn et al. 2015) but not others (Crump, McDonnell, and Gureckis 2013), likely reflects differences in experimental design, software, or hardware, given the effect’s dependence on millisecond-level precision. Failures to replicate online may also reflect limitations of the original in-lab studies, so researchers must weigh how much time and effort is warranted to identify the cause.
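As a rough illustration of the hybrid comparison described above, the sketch below computes a within-participant effect size (Cohen's d_z, the mean of the paired differences divided by their standard deviation) separately for a lab sample and an online sample. The data are hypothetical Stroop-style reaction times invented for this example, and the function name `cohens_d_paired` is our own; this is one reasonable way to put the two settings on a common scale, not the analysis used in the cited studies.

```python
import statistics


def cohens_d_paired(condition_a, condition_b):
    """Cohen's d_z: mean of the paired differences divided by their SD."""
    diffs = [a - b for a, b in zip(condition_a, condition_b)]
    return statistics.mean(diffs) / statistics.stdev(diffs)


# Hypothetical per-participant mean reaction times (ms); not real data.
lab_incongruent = [712, 698, 745, 730, 701, 760]
lab_congruent = [650, 640, 690, 662, 655, 700]
online_incongruent = [735, 690, 780, 720, 705, 770]
online_congruent = [668, 645, 700, 671, 650, 712]

d_lab = cohens_d_paired(lab_incongruent, lab_congruent)
d_online = cohens_d_paired(online_incongruent, online_congruent)

print(f"Lab effect size (d_z):    {d_lab:.2f}")
print(f"Online effect size (d_z): {d_online:.2f}")
print(f"Difference (online - lab): {d_online - d_lab:.2f}")
```

With realistic sample sizes, the difference between settings would normally be reported with a confidence interval (for example, from a bootstrap) rather than as a point estimate alone.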

4.1 The principle in action

We have adopted a ‘replicate first’ approach to evaluate the feasibility of conducting motor control experiments online. Movement data collected remotely using a computer mouse or trackpad reliably reproduce several benchmark effects observed in laboratory settings, including the scaling of movement time and movement speed with target distance (“Fitts’ law”) and directional reach biases across the angular workspace (Wang et al. 2024; Warburton et al. 2023), observations that date back at least 60 years (Begbie 1959; Brown and Slater-Hammel 1949; Fitts 1954). Moreover, participants exhibit canonical visuomotor adaptation profiles online (Coltman et al. 2021; Tsay et al. 2021; Tsay et al. 2024), along with classic sensorimotor learning phenomena such as savings, anterograde interference, and spontaneous recovery (Jang, Shadmehr, and Albert 2023). These validation efforts provide confidence in the viability of studying motor control and adaptation online.
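A Fitts' law check of the kind mentioned above could be sketched as follows. We assume the classic formulation, in which movement time grows linearly with an index of difficulty, ID = log2(2D/W), for target distance D and target width W. The distances, width, and movement times are hypothetical values chosen for illustration, and the helper names are our own.

```python
import math


def index_of_difficulty(distance, width):
    """Index of difficulty in bits: ID = log2(2D / W)."""
    return math.log2(2 * distance / width)


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = intercept + slope * x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


# Hypothetical values (pixels and ms); not real data.
target_width = 20
distances = [80, 160, 320, 640]
movement_times = [310, 405, 500, 610]

ids = [index_of_difficulty(d, target_width) for d in distances]
intercept_a, slope_b = fit_line(ids, movement_times)

print(f"Intercept a: {intercept_a:.1f} ms")
print(f"Slope b:     {slope_b:.1f} ms/bit")
```

A positive slope, meaning longer movement times for more difficult (farther) targets, is the signature one would look for in a validation condition.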

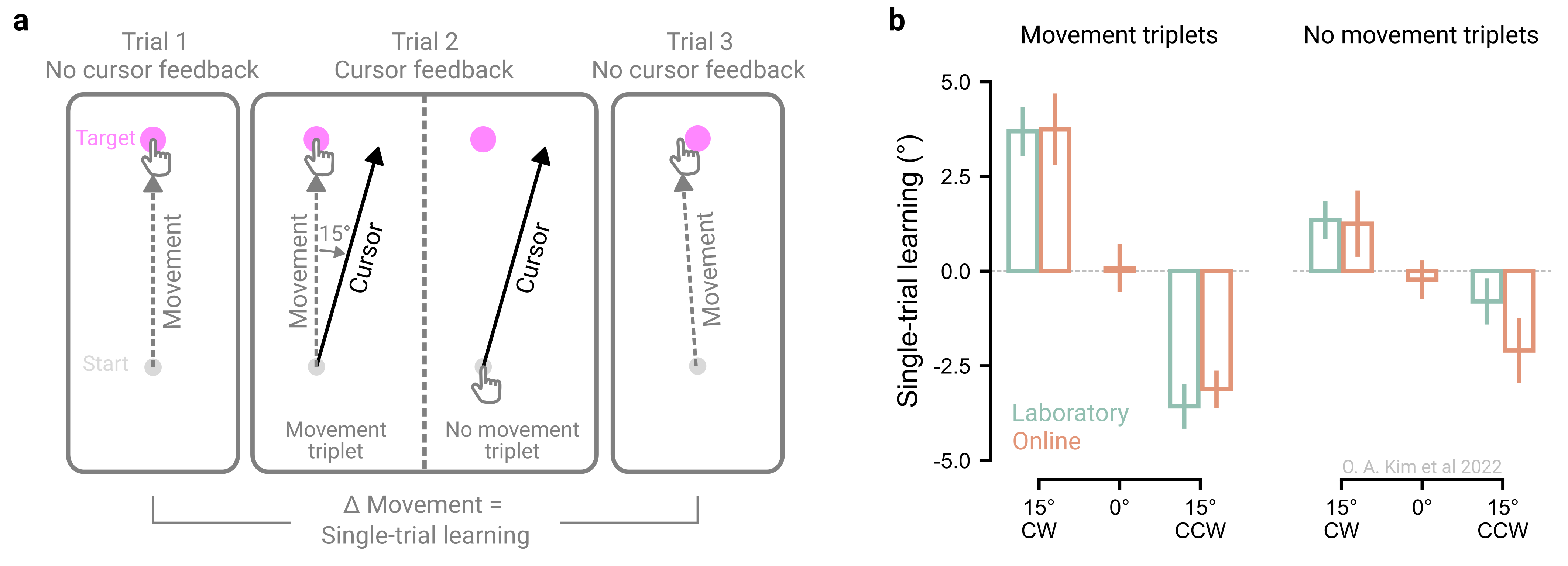

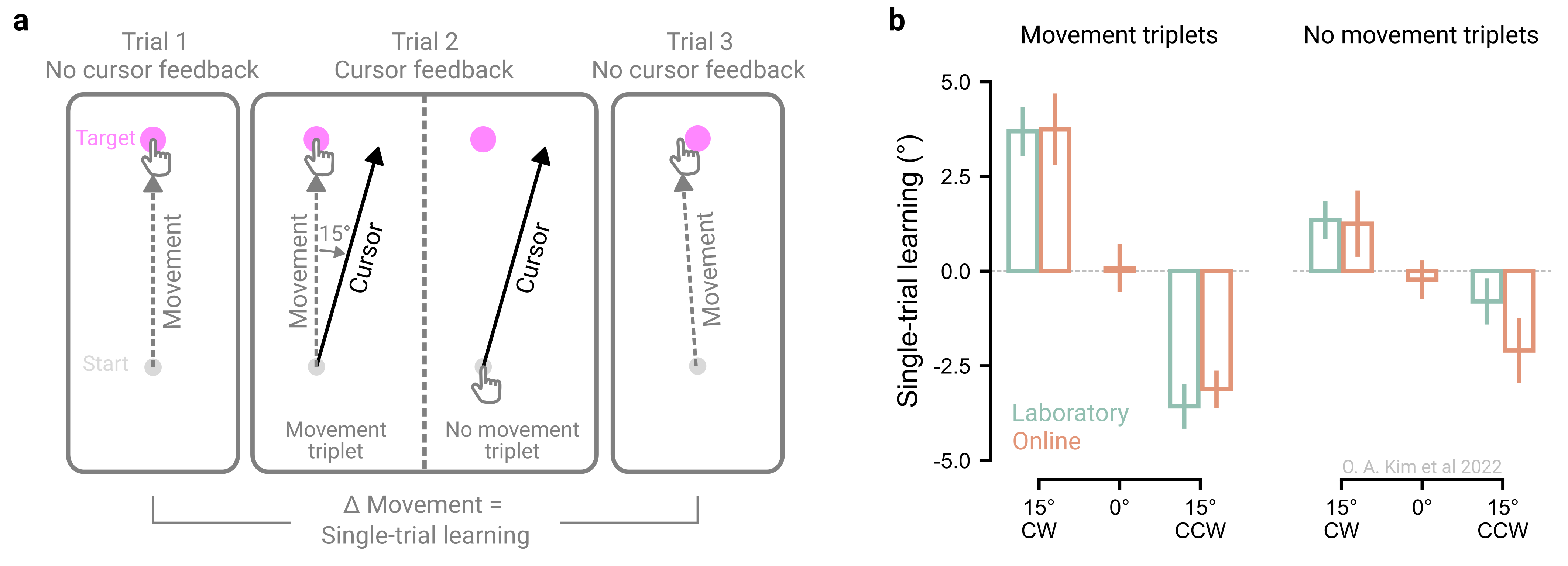

Others have employed a hybrid approach to test more novel effects, such as one-shot motor learning from withheld movements, by directly comparing performance in the lab and online (Kim, Forrence, and McDougle 2022). In this experiment, movements across trials were organized into ‘triplets’, where a trial with perturbed visual feedback (15° visuomotor rotation) was flanked by two no-feedback trials. Single-trial learning was measured as the change in movement angle between the two flanking no-feedback trials (Figure 4.1a). Two conditions were tested: a ‘movement’ triplet, in which participants saw rotated cursor feedback relative to their executed movement, and a ‘no-movement’ triplet, in which feedback was referenced to the planned movement because participants were instructed to rapidly inhibit movement execution.
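The triplet measure described above can be sketched in a few lines. The example assumes that each triplet records the movement angle on the two flanking no-feedback trials and the signed rotation applied on the middle trial, and that the change is sign-flipped so that positive values indicate a shift opposing the rotation. The sign convention, the field names, and the data are our assumptions for illustration, not details taken from the original study.

```python
import statistics


def single_trial_learning(pre_angle, post_angle, rotation):
    """Change in movement angle across a triplet (degrees).

    Signed so that positive values mean the movement shifted in the
    direction opposing the rotation (an assumed convention).
    """
    change = post_angle - pre_angle
    return -change if rotation > 0 else change


# Hypothetical triplets: angles on the flanking no-feedback trials and
# the rotation (degrees) applied on the middle, perturbed trial.
triplets = [
    {"pre": 0.5, "post": -1.8, "rotation": 15},
    {"pre": -0.2, "post": 2.1, "rotation": -15},
    {"pre": 1.0, "post": -0.9, "rotation": 15},
    {"pre": 0.3, "post": 2.0, "rotation": -15},
]

learning = [
    single_trial_learning(t["pre"], t["post"], t["rotation"]) for t in triplets
]
mean_learning = statistics.mean(learning)

print(f"Mean single-trial learning: {mean_learning:.2f} deg")
```

Computing the same quantity separately for ‘movement’ and ‘no-movement’ triplets, and separately for lab and online samples, gives the values needed for a comparison across settings.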

Robust one-shot motor learning was observed for both triplet types in both online (computer mouse or trackpad) and laboratory (robotic manipulandum) settings. Learning magnitudes were also strikingly similar across settings (Figure 4.1b). This hybrid approach demonstrated that motor adaptation, whether or not it involves physical movement, is robust and generalizes across settings.