Careful behavioral observations can reveal the intricate workings of the human mind (Krakauer et al. 2017; Niv 2021). For example, how vigorously we move betrays our preferences: we walk more briskly when meeting a close friend and drag our feet when heading to an unwelcome appointment (Shadmehr et al. 2019). Similarly, our behavior reflects our confidence: we might decisively grab our favorite drink at a breakfast buffet but tentatively hover between the different pastry options (Dotan, Meyniel, and Dehaene 2018).

For much of its history, behavioral research has been conducted in the laboratory, where strict oversight ensures consistency. Such experimental control reduces the risk that the studied behaviors are confounded by environmental factors, for example by minimizing external distractions that might otherwise alter participants’ responses. Moreover, in-lab testing grants access to specialized, often costly equipment – such as robotic manipulanda that can passively move the arm or apply perturbing forces – allowing researchers to precisely manipulate and measure behavior that would otherwise be difficult to capture.

However, laboratory experiments have notable limitations. They are time-intensive, typically allowing only a few participants to be tested at once, often leading to small, underpowered samples collected over restricted timeframes (Szucs and Ioannidis 2017). As a result, findings can be difficult to replicate (Cohen 1962; Marek et al. 2022; Open Science Collaboration 2015). Furthermore, laboratory studies often involve a homogeneous group of participants, for example ‘WEIRD’ individuals (Henrich, Heine, and Norenzayan 2010) or undergraduate students (Arnett 2008), which may limit the extent to which research findings generalize to the broader population (Gordon, Slade, and Schmitt 1986; Henrich, Heine, and Norenzayan 2010).

Behavioral researchers are increasingly moving their studies beyond the confines of the laboratory (Vallet and Van Wassenhove 2023). One way to do so is through field-based experiments, testing participants in classrooms, clinics, workplaces, or other real-world settings (e.g., Banerjee et al. 2025; Cullen and Oppenheimer 2024). Another way is crowdsourcing, the practice of recruiting large and diverse groups of individuals, which offers a compelling means of scaling behavioral research. By harnessing distributed participants, crowdsourcing overcomes many of the limitations of traditional in-person testing.

Crowdsourcing comes in many flavors. Some researchers leverage opportunity sampling at science fairs or museum exhibitions, combining the rigor of in-person testing with access to broader demographics (Clode et al. 2024; Das et al. 2025; Ruitenberg et al. 2023; Turner et al. 2023). However, the biggest growth in crowdsourced research has come from online experiments, in which participants complete tasks remotely on personal devices such as computers (Reips 2001), phones (Coutrot et al. 2018), or virtual reality headsets (Cesanek et al. 2024).

What are the advantages of online behavioral experiments? Unlike in-person studies, online experiments can be accessed by many individuals simultaneously, significantly reducing the time required for data collection (Reips 2000). This efficiency also allows researchers to tackle questions that would be impractical or prohibitively resource-intensive in traditional lab settings – for example, investigating how a given psychological function varies continuously with age, rather than relying on categorical comparisons between ‘younger’ and ‘older’ groups (Hartshorne and Germine 2015; Spiers, Coutrot, and Hornberger 2023; Tsay et al. 2024). Moreover, large sample sizes provide greater statistical power to detect meaningful behavioral effects and to monitor changes over time, thereby enhancing the likelihood of replicable findings (Johnson et al. 2022).
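To make the link between sample size and statistical power concrete, the following is a minimal sketch that is not drawn from any of the cited studies. It uses a normal approximation to a two-sided, two-group comparison with equal group sizes; the effect size (Cohen's d = 0.3), the alpha level of 0.05, and the function name `approx_power` are illustrative assumptions.

```python
from math import sqrt
from statistics import NormalDist


def approx_power(d: float, n_per_group: int, alpha: float = 0.05) -> float:
    """Approximate power of a two-sided, two-sample z-test.

    d is the standardized mean difference (Cohen's d). Both groups are
    assumed to have n_per_group participants and equal, known variance.
    """
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)
    # Expected value of the test statistic under the alternative hypothesis.
    shift = d * sqrt(n_per_group / 2)
    # Probability of landing in either rejection region.
    return (1 - std_normal.cdf(z_crit - shift)) + std_normal.cdf(-z_crit - shift)


if __name__ == "__main__":
    # Hypothetical group sizes, from a small lab sample to a large online one.
    for n in (20, 100, 500):
        print(f"n per group = {n:>4}: power ~ {approx_power(0.3, n):.2f}")
```

Under these assumptions, power is well below 50% with 20 participants per group and approaches 100% with 500, which is the kind of gain that large online samples can offer.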

If a central goal of behavioral research is to uncover human universals, then crowdsourcing offers a powerful advantage: access to a more diverse and representative sample than is typically available in laboratory settings (Casler, Bickel, and Hackett 2013; Gosling et al. 2010; Hartshorne et al. 2019; Smith et al. 2015). That is, researchers can use online crowdsourcing to chart the hidden landscape of perceptual, cognitive, and motor diversity in the population. Together, these advantages have established online experiments as a staple in the modern researcher’s toolbox, enabling unprecedented insights into human behavior across a wide range of subfields.

However, online experiments come with notable trade-offs – most prominently, the loss of experimental control. First, researchers sacrifice some control over hardware, since participants typically use their own devices, introducing variability in stimulus presentation times, response times, and the peripherals used to make responses (Bridges et al. 2020). When not appropriately handled, these factors may introduce misleading or spurious correlations (Pronk et al. 2020). Second, researchers sacrifice some control over their participants. It is often difficult to know whether participants understand the instructions and/or remain engaged throughout the task, and if unaddressed, these issues can compromise data quality and yield misleading conclusions.

Ten principles for crowdsourcing behavioral experiments online

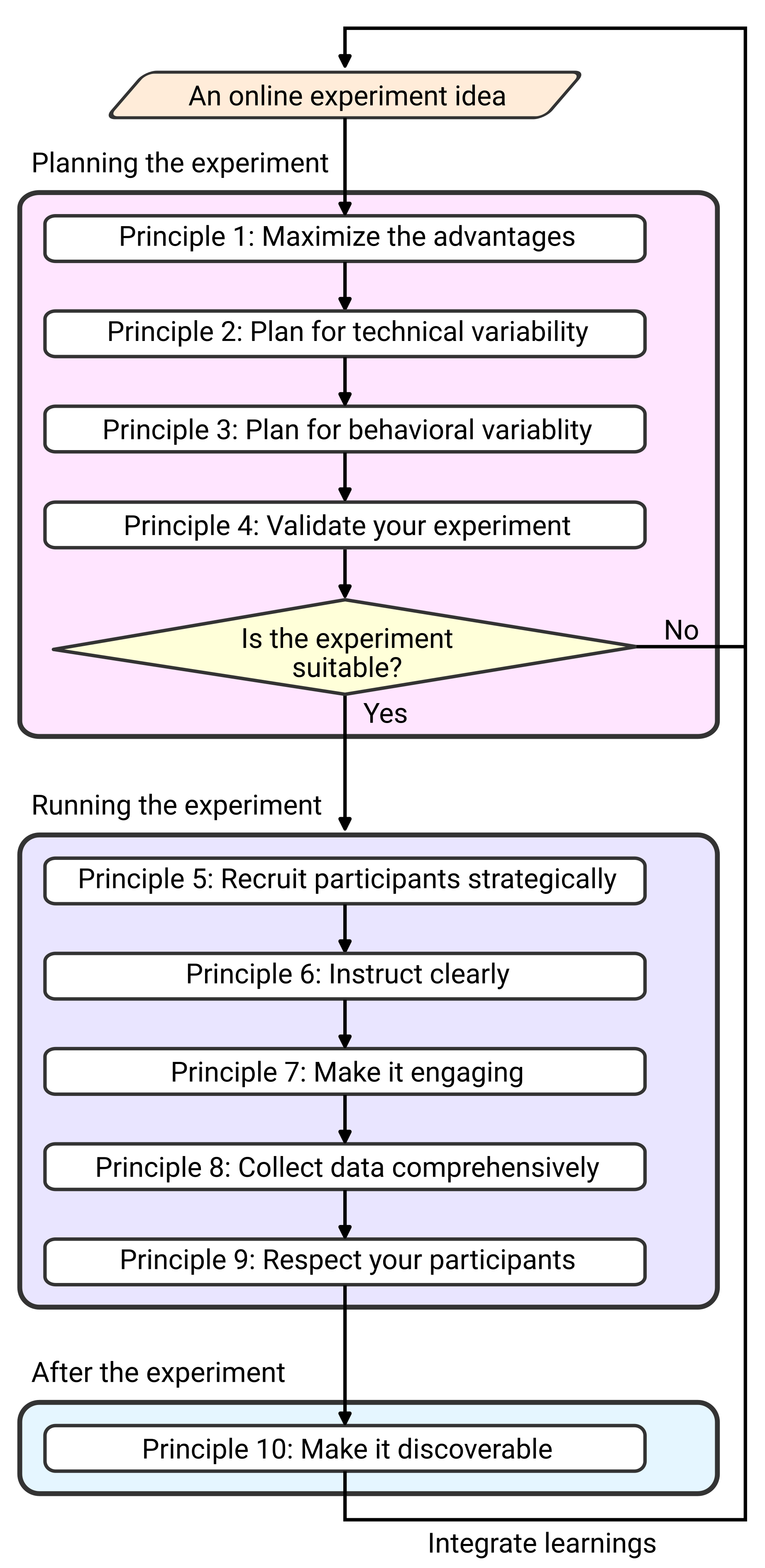

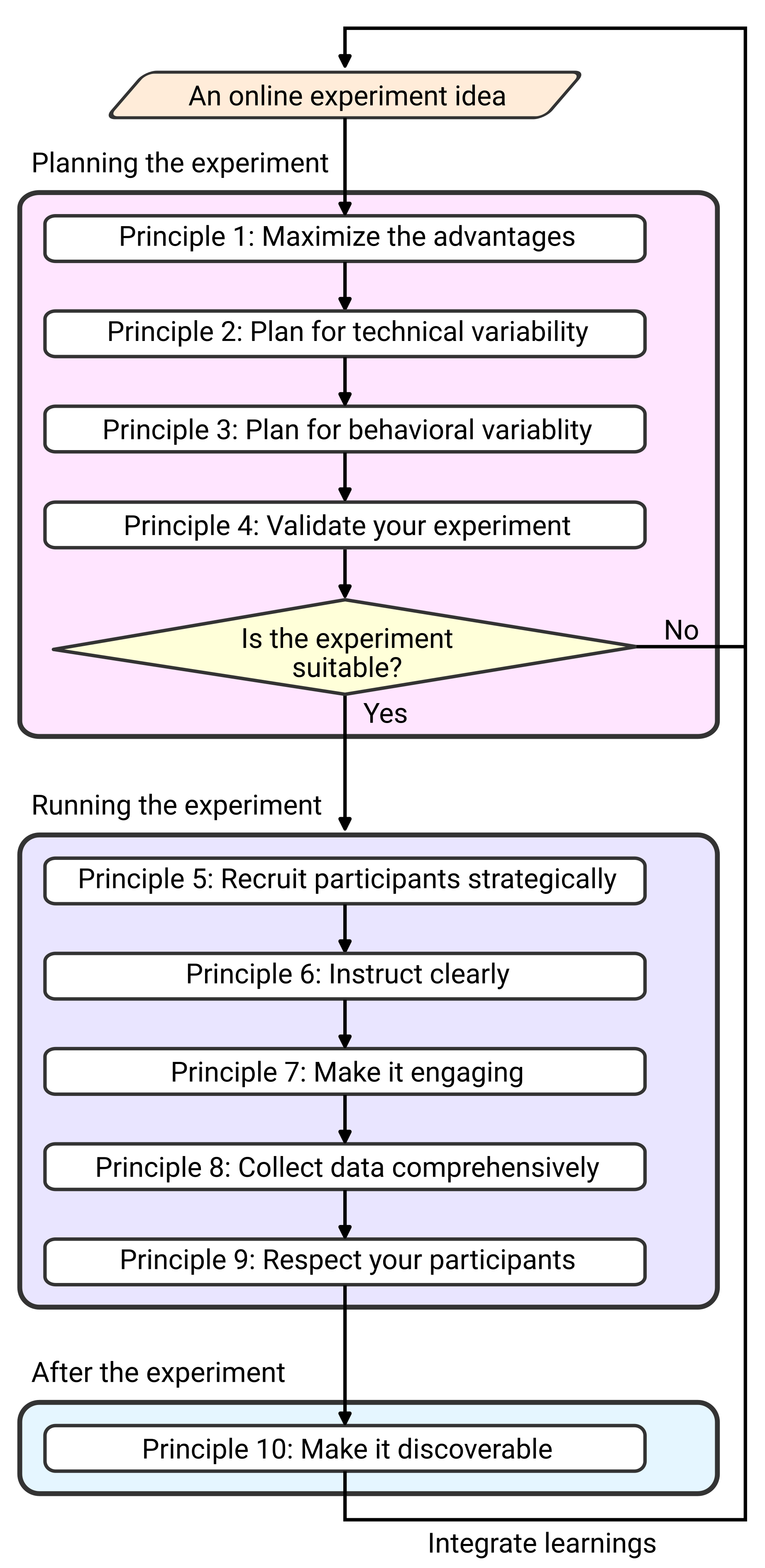

To tackle these challenges, we present a beginner-friendly, practical guide to conducting high-quality crowdsourced behavioral experiments online (Figure 1). Rather than focusing on implementation details, we distill ten principles that provide a structured framework for optimizing online study design, evaluating data quality, and mitigating common pitfalls. Each principle is grounded in concrete examples from crowdsourced motor control and learning studies, a domain that places stringent demands on experimental control and behavioral measurement. Demonstrating success under these conditions establishes both the feasibility and broad applicability of the ten principles across behavioral domains and crowdsourcing platforms.

Arnett, Jeffrey J. 2008. “The Neglected 95%: Why American Psychology Needs to Become Less American.” American Psychologist 63 (7): 602–14. https://doi.org/10.1037/0003-066X.63.7.602.

Banerjee, Abhijit V., Swati Bhattacharjee, Raghabendra Chattopadhyay, Esther Duflo, Alejandro J. Ganimian, Kailash Rajah, and Elizabeth S. Spelke. 2025. “Children’s Arithmetic Skills Do Not Transfer Between Applied and Academic Mathematics.” Nature 639 (8055): 673–81. https://doi.org/10.1038/s41586-024-08502-w.

Bridges, David, Alain Pitiot, Michael R. MacAskill, and Jonathan W. Peirce. 2020. “The Timing Mega-Study: Comparing a Range of Experiment Generators, Both Lab-Based and Online.” PeerJ 8 (July): e9414. https://doi.org/10.7717/peerj.9414.

Casler, Krista, Lydia Bickel, and Elizabeth Hackett. 2013. “Separate but Equal? A Comparison of Participants and Data Gathered via Amazon’s MTurk, Social Media, and Face-to-Face Behavioral Testing.” Computers in Human Behavior 29 (6): 2156–60. https://doi.org/10.1016/j.chb.2013.05.009.

Cesanek, Evan, Sabyasachi Shivkumar, James N. Ingram, and Daniel M. Wolpert. 2024. “Ouvrai Opens Access to Remote Virtual Reality Studies of Human Behavioural Neuroscience.” Nature Human Behaviour, April. https://doi.org/10.1038/s41562-024-01834-7.

Clode, Dani, Lucy Dowdall, Edmund Da Silva, Klara Selén, Dorothy Cowie, Giulia Dominijanni, and Tamar R. Makin. 2024. “Evaluating Initial Usability of a Hand Augmentation Device Across a Large and Diverse Sample.” Science Robotics 9 (90): eadk5183. https://doi.org/10.1126/scirobotics.adk5183.

Cohen, Jacob. 1962. “The Statistical Power of Abnormal-Social Psychological Research: A Review.” The Journal of Abnormal and Social Psychology 65 (3): 145.

Coutrot, Antoine, Ricardo Silva, Ed Manley, Will De Cothi, Saber Sami, Véronique D. Bohbot, Jan M. Wiener, et al. 2018. “Global Determinants of Navigation Ability.” Current Biology 28 (17): 2861–2866.e4. https://doi.org/10.1016/j.cub.2018.06.009.

Cullen, Simon, and Daniel Oppenheimer. 2024. “Choosing to Learn: The Importance of Student Autonomy in Higher Education.” Science Advances 10 (29): eado6759. https://doi.org/10.1126/sciadv.ado6759.

Das, Anwesha, Alexandros Karagiorgis, Jörn Diedrichsen, Max-Philipp Stenner, and Elena Azañón. 2025. “Micro-Offline Gains Do Not Reflect Offline Learning During Early Motor Skill Acquisition in Humans.” Proceedings of the National Academy of Sciences 122 (44): e2509233122. https://doi.org/10.1073/pnas.2509233122.

Dotan, Dror, Florent Meyniel, and Stanislas Dehaene. 2018. “On-Line Confidence Monitoring During Decision Making.” Cognition 171 (February): 112–21. https://doi.org/10.1016/j.cognition.2017.11.001.

Gordon, Michael E., L. Allen Slade, and Neal Schmitt. 1986. “The ‘Science of the Sophomore’ Revisited: From Conjecture to Empiricism.” The Academy of Management Review 11 (1): 191–207.

Gosling, Samuel D., Carson J. Sandy, Oliver P. John, and Jeff Potter. 2010. “Wired but Not WEIRD: The Promise of the Internet in Reaching More Diverse Samples.” Behavioral and Brain Sciences 33 (2–3): 94–95. https://doi.org/10.1017/S0140525X10000300.

Hartshorne, Joshua K., Joshua R. De Leeuw, Noah D. Goodman, Mariela Jennings, and Timothy J. O’Donnell. 2019. “A Thousand Studies for the Price of One: Accelerating Psychological Science with Pushkin.” Behavior Research Methods 51 (4): 1782–1803. https://doi.org/10.3758/s13428-018-1155-z.

Hartshorne, Joshua K., and Laura T. Germine. 2015. “When Does Cognitive Functioning Peak? The Asynchronous Rise and Fall of Different Cognitive Abilities Across the Life Span.” Psychological Science 26 (4): 433–43. https://doi.org/10.1177/0956797614567339.

Henrich, Joseph, Steven J. Heine, and Ara Norenzayan. 2010. “The Weirdest People in the World?” Behavioral and Brain Sciences 33 (2–3): 61–83. https://doi.org/10.1017/S0140525X0999152X.

Johnson, Brian P., Eran Dayan, Nitzan Censor, and Leonardo G. Cohen. 2022. “Crowdsourcing in Cognitive and Systems Neuroscience.” The Neuroscientist 28 (5): 425–37. https://doi.org/10.1177/10738584211017018.

Krakauer, John W., Asif A. Ghazanfar, Alex Gomez-Marin, Malcolm A. MacIver, and David Poeppel. 2017. “Neuroscience Needs Behavior: Correcting a Reductionist Bias.” Neuron 93 (3): 480–90. https://doi.org/10.1016/j.neuron.2016.12.041.

Marek, Scott, Brenden Tervo-Clemmens, Finnegan J. Calabro, David F. Montez, Benjamin P. Kay, Alexander S. Hatoum, Meghan Rose Donohue, et al. 2022. “Reproducible Brain-Wide Association Studies Require Thousands of Individuals.” Nature 603 (7902): 654–60. https://doi.org/10.1038/s41586-022-04492-9.

Niv, Yael. 2021. “The Primacy of Behavioral Research for Understanding the Brain.” Behavioral Neuroscience 135 (5): 601–9. https://doi.org/10.1037/bne0000471.

Open Science Collaboration. 2015. “Estimating the Reproducibility of Psychological Science.” Science 349 (6251): aac4716-1 - aac4716-8. https://doi.org/10.1126/science.aac4716.

Pronk, Thomas, Reinout W. Wiers, Bert Molenkamp, and Jaap Murre. 2020. “Mental Chronometry in the Pocket? Timing Accuracy of Web Applications on Touchscreen and Keyboard Devices.” Behavior Research Methods 52 (3): 1371–82. https://doi.org/10.3758/s13428-019-01321-2.

Reips, Ulf-Dietrich. 2000. “The Web Experiment Method.” In Psychological Experiments on the Internet, 89–117. Elsevier. https://doi.org/10.1016/B978-012099980-4/50005-8.

Reips, Ulf-Dietrich. 2001. “The Web Experimental Psychology Lab: Five Years of Data Collection on the Internet.” Behavior Research Methods, Instruments, & Computers 33 (2): 201–11. https://doi.org/10.3758/BF03195366.

Ruitenberg, Marit F. L., Vincent Koppelmans, Rachael D. Seidler, and Judith Schomaker. 2023. “Developmental and Age Differences in Visuomotor Adaptation Across the Lifespan.” Psychological Research 87 (6): 1710–17. https://doi.org/10.1007/s00426-022-01784-7.

Shadmehr, Reza, Thomas R. Reppert, Erik M. Summerside, Tehrim Yoon, and Alaa A. Ahmed. 2019. “Movement Vigor as a Reflection of Subjective Economic Utility.” Trends in Neurosciences 42 (5): 323–36. https://doi.org/10.1016/j.tins.2019.02.003.

Smith, Nicholas A., Isaac E. Sabat, Larry R. Martinez, Kayla Weaver, and Shi Xu. 2015. “A Convenient Solution: Using MTurk To Sample From Hard-To-Reach Populations.” Industrial and Organizational Psychology 8 (2): 220–28. https://doi.org/10.1017/iop.2015.29.

Spiers, Hugo J., Antoine Coutrot, and Michael Hornberger. 2023. “Explaining World‐Wide Variation in Navigation Ability from Millions of People: Citizen Science Project Sea Hero Quest.” Topics in Cognitive Science 15 (1): 120–38. https://doi.org/10.1111/tops.12590.

Szucs, Denes, and John P. A. Ioannidis. 2017. “Empirical Assessment of Published Effect Sizes and Power in the Recent Cognitive Neuroscience and Psychology Literature.” Edited by Eric-Jan Wagenmakers. PLOS Biology 15 (3): e2000797. https://doi.org/10.1371/journal.pbio.2000797.

Tsay, Jonathan S., Hrach Asmerian, Laura T. Germine, Jeremy Wilmer, Richard B. Ivry, and Ken Nakayama. 2024. “Large-Scale Citizen Science Reveals Predictors of Sensorimotor Adaptation.” Nature Human Behaviour, January. https://doi.org/10.1038/s41562-023-01798-0.

Turner, Christopher, Satu Baylan, Martina Bracco, Gabriela Cruz, Simon Hanzal, Marine Keime, Isaac Kuye, et al. 2023. “Developmental Changes in Individual Alpha Frequency: Recording EEG Data During Public Engagement Events.” Imaging Neuroscience 1 (August): 1–14. https://doi.org/10.1162/imag_a_00001.

Vallet, William, and Virginie Van Wassenhove. 2023. “Can Cognitive Neuroscience Solve the Lab-Dilemma by Going Wild?” Neuroscience & Biobehavioral Reviews 155 (December): 105463. https://doi.org/10.1016/j.neubiorev.2023.105463.